Four weeks from now, the eighth edition of the Gerrit User Summit will open its doors at Google HQ in Mountain View, CA, on the 12th and 13th of November 2016.

It has been a long journey since the first GitTogether in 2008, and quite a lot has changed since the split between the Git[Hub Universe] summit and the traditional “unconference”-style Gerrit event at Google. While Gerrit has remained a 100% open-source, user-centric project, GitHub has attracted $350M in VC funding and has gradually drifted away from the unconference-style events.

What’s new this year?

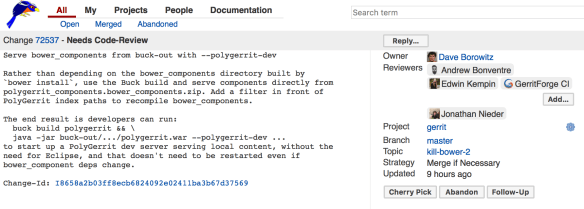

For the first time, talk proposals for the Gerrit User Summit are submitted directly in Gerrit (yeah!), as changes on the summit/2016 repository.

The list of currently approved talks is available by searching for “status:merged project:summit/2016” (https://gerrit-review.googlesource.com/#/q/status:merged+project:summit/2016)

The talks awaiting review are under “status:open project:summit/2016” (https://gerrit-review.googlesource.com/#/q/status:open+project:summit/2016)
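If you prefer the command line to the web UI, the same searches can be run against Gerrit's REST API. The snippet below is only a minimal sketch: the helper names `changes_url` and `strip_xssi` are made up for this post, and it assumes the server allows anonymous queries. It builds the `/changes/?q=...` URL for a query and removes the `)]}'` line that Gerrit puts in front of its JSON responses.

```shell
#!/bin/sh
# Gerrit server hosting the summit proposals (same host as the links above).
GERRIT_URL="https://gerrit-review.googlesource.com"

# Hypothetical helper: build the REST URL for a change query.
# Spaces between search operators become '+', as in the web UI links.
changes_url() {
  printf '%s/changes/?q=%s\n' "$GERRIT_URL" "$(printf '%s' "$1" | tr ' ' '+')"
}

# Hypothetical helper: Gerrit prefixes JSON responses with the line )]}'
# to prevent XSSI; delete that first line so the rest is plain JSON.
strip_xssi() {
  sed "1{/^)]}'/d;}"
}

# Example (needs network access):
#   curl -s "$(changes_url 'status:merged project:summit/2016')" | strip_xssi
```

Swap `status:merged` for `status:open` to list the proposals that are still waiting for review.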

How cool is that? I already foresee a Doodle plugin for Gerrit 😉

How to register for the User Summit?

Shawn Pearce has prepared a Registration Form for you to sign up for the event:

https://goo.gl/forms/oeEnQweHl2noNSnn1

Once you access the Registration Form at the above URL, you need to sign in with your Google Account credentials and then complete the following information:

– Your name

– Your Organisation

– Your previous attendance at the User Summit

– Any dietary restrictions

The User Summit is FREE for EVERYONE, including novice users of Git and Gerrit Code Review, but you need to register beforehand.

The Summit is a unique opportunity to learn about Gerrit’s new features, contribute to the product roadmap with your needs and requirements and, most of all, network with other users to learn new use-cases where Gerrit can be very helpful.

How to submit my talk proposal?

Well, you need to demonstrate a good understanding and use of Gerrit Code Review if you want to teach and talk to other people about it! At the end of the day, if you want to talk about Gerrit you should be able to clone a repository and submit a patch to a project 🙂

If you need just a little help … see my “Diffy super-duper talk” example:

    $ git clone https://gerrit.googlesource.com/summit/2016 && (cd 2016 && curl -Lo `git rev-parse --git-dir`/hooks/commit-msg https://gerrit-review.googlesource.com/tools/hooks/commit-msg ; chmod +x `git rev-parse --git-dir`/hooks/commit-msg)
    $ cd 2016
    $ cat - > sessions/my-amazing-talk.md
    # My amazing talk at Gerrit User Summit

    Hi folks, this is my super-duper-talk.
    You should be interested in it as I will unleash the dark force of Code Review Diffy Kung Fu Review Cuckoo.

    *Diffy, Birds & CO. Inc.*
    ^D
    $ git add sessions/my-amazing-talk.md && git commit -m "Diffy super-duper talk"
    $ git push origin HEAD:refs/for/master

Talk highlights.

There are already some fascinating talks submitted and approved and more will undoubtedly come in the next couple of weeks. We will start sharing some highlights of what’s happening at the conference. Here is the overview of the first talks.

What’s new in Gerrit 2.12 and 2.13

Two major versions of Gerrit have been released since the last summit in 2015, and they contain significant improvements to the platform:

- Topic submission workflow – aka Git commits across repositories (v2.12).

Group multiple changes in a “topic” and having them merged as a whole, even across multiple repositories, in a single submit operation. - GPG signed pushed verification (v2.12).

Allows people to upload their GPG public keys into Gerrit and have them used to verify Git signed commits. - Large File Storage support (Git LFS) (v2.13).

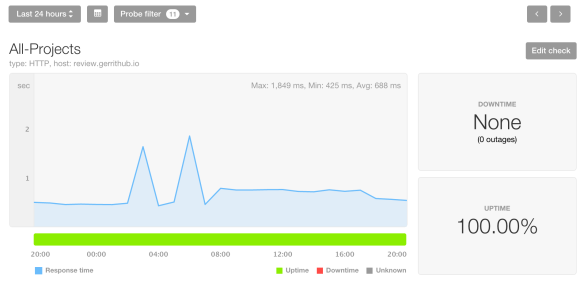

Gerrit finally supports the automatic management of large files outside the Git repository. The feature is fully pluggable and exposed via plugins. Amazon S3 and Local file system support are available at the moment, but more plugins are here to come on this feature. - Gerrit metrics (v2.13).

Expose the internal metrics to external consumers. The feature is exposed for plugins to gather this data and send to external systems for analysis and visualization purposes. Graphite, ElasticSearch, and JMX plugins are available. - Hooks plugin (v2.13).

Finally, the Gerrit hooks mechanism have been entirely externalized and implemented in a pluggable way. The legacy hooks have become a core plugin. However, you can now leverage the new extension to develop a new-generation of hooks by leveraging the new extension points provided. - New HTML5 UX with WebComponents – PolyGerrit preview (v2.13).

The next generation of Gerrit UX based on Polymer Web components is available. Even though not complete, offers a sneak preview of what the new interface looks like and, if you like it as-is and is good enough for your use-cases, you can enable and start using it already. Both GWT and Polymer-based UX are using the same REST API, and thus the changes generated and reviewed with them are 100% interoperable.
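To give a flavour of the topic workflow listed above: a change can be assigned to a topic at push time by appending `%topic=<name>` to the `refs/for/<branch>` target. The sketch below is illustrative only. The `review_ref` helper and the `summit-demo` topic name are invented for this example, and whether the changes of a topic are actually submitted together depends on how your Gerrit server is configured.

```shell
#!/bin/sh
# Hypothetical helper: print the push target that sends a change for review
# on a branch and assigns it to a topic in the same step.
review_ref() {
  branch="$1"
  topic="$2"
  printf 'refs/for/%s%%topic=%s\n' "$branch" "$topic"
}

# Example (run inside each repository that takes part in the topic):
#   git push origin "HEAD:$(review_ref master summit-demo)"
```

Pushing changes from several repositories with the same topic name groups them under that topic in Gerrit.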

There is more to come.

In the next few days we will keep publishing highlights of the topics coming to the Gerrit User Summit this year. Stay tuned and REGISTER NOW at:

https://goo.gl/forms/oeEnQweHl2noNSnn1

The GerritForge Team.